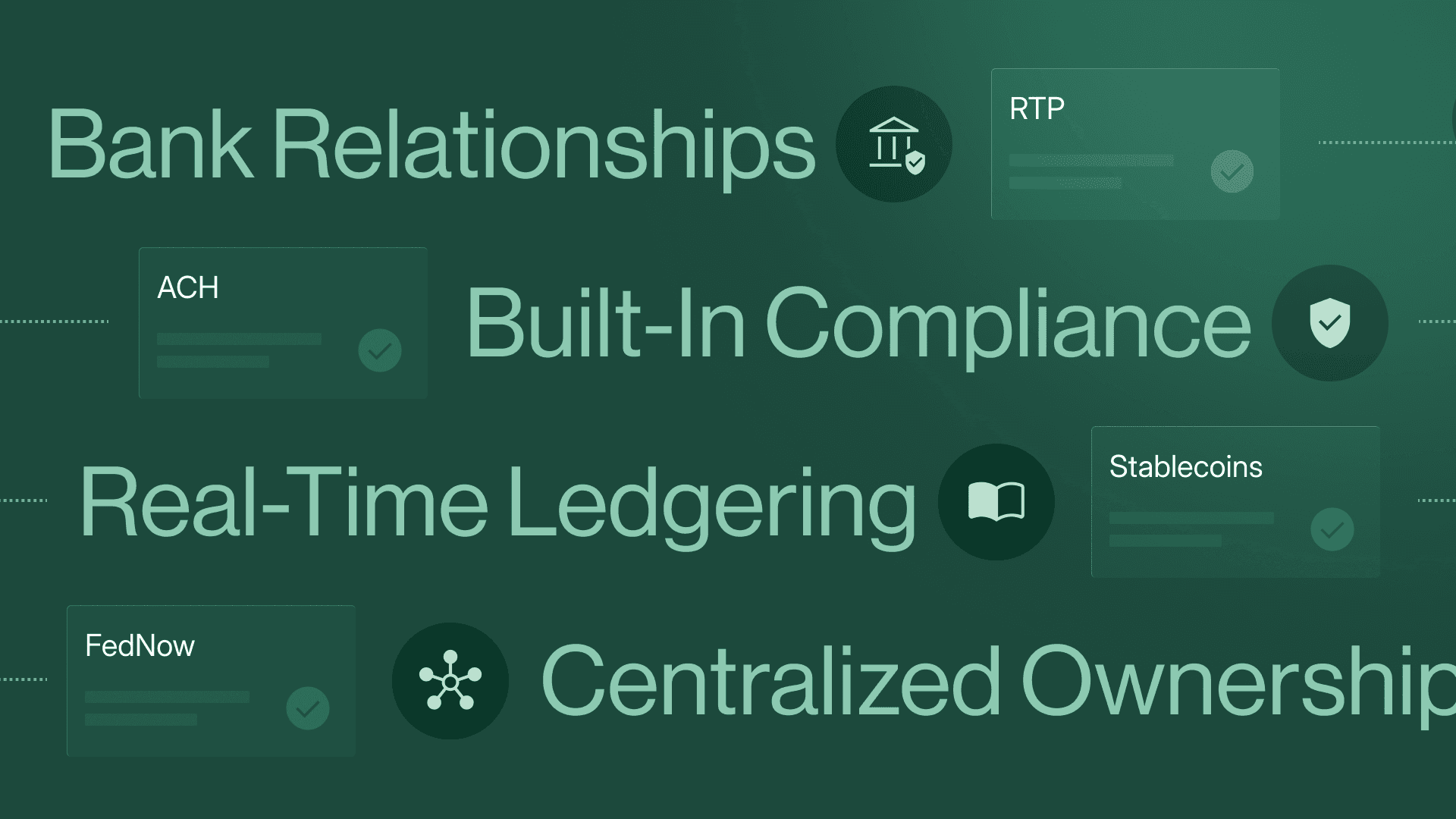

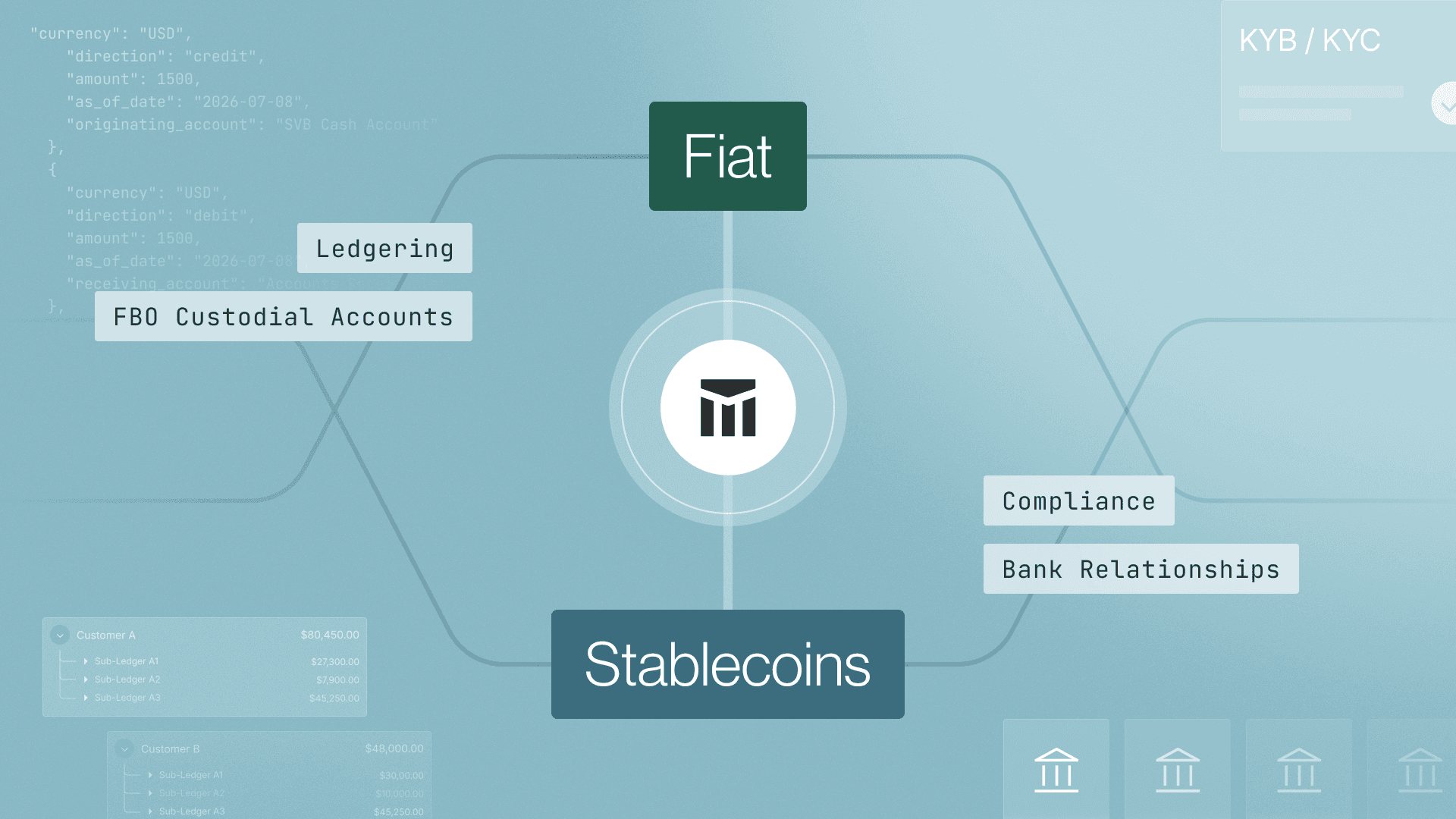


How to Scale a Ledger, Part V: Immutability and Double-Entry

In the fifth part of our series, we examine two of our four ledger guarantees, immutability and double-entry, and how the API can provide them.


This post is the fifth chapter of a broader technical paper, How to Scale a Ledger. Here’s what we’ve covered so far:

- Part I: Why you should use a ledger database

- Part II: How to map financial events to double-entry primitives

- Part III: How a transaction model enables atomic money movement

- Part IV: How ledgers support recording and authorizing

In this chapter, we’ll dig deeper into the ledger guarantees of immutability and double-entry.

Immutability: Reconstructing Every Ledger State

Guarantee: Every state of the ledger must be recorded and can be easily reconstructed.

Immutability is the most important guarantee from a ledger. You may be wondering how immutability can be guaranteed if ledgers allow mutable fields:

- Accounts: The balances change as Entries are written to them.

- Transactions: The state can change from pending to either posted or archived. While the Transaction is pending, the Entries can change too.

- Entries: Entries can be discarded.

The Immutable Log Underneath

These mutable fields on our core data model help the ledger match real-world money movement. But ultimately, the data model is built on top of an immutable, append-only log. All changes are preserved, and this log can be queried in a few different ways to reconstruct past states:

- effective_at timestamps

- account_version numbers

- Transaction version fields
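As a rough illustration of the append-only idea, here is a minimal in-memory sketch. The `LogRecord` and `AppendOnlyLog` names, the `sequence` counter, and the signed `delta` field are assumptions made for this example, not the Ledgers API's storage model.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LogRecord:
    """One immutable record in the log (hypothetical shape)."""
    sequence: int     # position in the log, assigned on append
    account_id: str
    delta: int        # signed balance change in minor units


class AppendOnlyLog:
    """Records are only ever appended, never updated or deleted."""

    def __init__(self) -> None:
        self._records: List[LogRecord] = []

    def append(self, account_id: str, delta: int) -> LogRecord:
        record = LogRecord(len(self._records), account_id, delta)
        self._records.append(record)
        return record

    def balances_as_of(self, sequence: int) -> Dict[str, int]:
        """Rebuild balances by replaying records up to and including `sequence`."""
        balances: Dict[str, int] = {}
        for record in self._records[: sequence + 1]:
            balances[record.account_id] = balances.get(record.account_id, 0) + record.delta
        return balances
```

Because nothing is overwritten, any earlier state is a replay of a prefix of the log rather than a value that has to be backed up separately.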

Querying Past States

We’ve already covered how effective_at allows us to see what the balance on an Account was at an earlier time. We can also see the Entries that were present on an Account at a timestamp, since no Entries are ever actually deleted. The Entries on Account account_a at effective time timestamp_a can be fetched by filtering:
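As a minimal sketch of such a filter, assume each Entry carries an `account_id`, an `effective_at` timestamp, and an optional `discarded_at` timestamp that is set instead of deleting the Entry. The field layout, the `entries_at` helper, and the exact comparison operators are assumptions for illustration, not the Ledgers API's definition.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class Entry:
    id: str
    account_id: str
    effective_at: datetime
    discarded_at: Optional[datetime] = None  # set on discard; the Entry itself is kept


def entries_at(entries: List[Entry], account_id: str, timestamp: datetime) -> List[Entry]:
    """Entries on `account_id` that were effective and not yet discarded at `timestamp`."""
    return [
        e for e in entries
        if e.account_id == account_id
        and e.effective_at <= timestamp
        # Assumption: an Entry discarded exactly at `timestamp` is treated as gone.
        and (e.discarded_at is None or e.discarded_at > timestamp)
    ]
```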

This logic ensures consistent job reads, even if Entries arrive late or out of order.

Using Versions for Precision

As we’ve seen before, timestamps may not be sufficient to allow clients to query all past states in ledgers that are operating at scale:

- Entries can be written at any point in the past using the effective_at timestamp

- Entries may share the same effective_at or discarded_at timestamp

Ledgers should solve the drawbacks of timestamps by introducing versions on the main data models: Accounts and Transactions. Account versions that are persisted on Entries allow us to query exactly which Entries correspond to a given Account balance.
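One way to picture Account versions persisted on Entries is the sketch below. It assumes a credit-normal Account whose version increments by one on every write and an `account_version` field stamped on each Entry; the class and method names are hypothetical and discards are left out for brevity.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Entry:
    account_id: str
    amount: int            # minor units, e.g. cents
    direction: str         # "credit" or "debit"
    account_version: int   # Account version produced by this write


@dataclass
class Account:
    id: str
    version: int = 0
    entries: List[Entry] = field(default_factory=list)

    def write(self, amount: int, direction: str) -> Entry:
        """Bump the Account version and stamp it on the new Entry."""
        self.version += 1
        entry = Entry(self.id, amount, direction, self.version)
        self.entries.append(entry)
        return entry

    def entries_at_version(self, version: int) -> List[Entry]:
        return [e for e in self.entries if e.account_version <= version]

    def balance_at_version(self, version: int) -> int:
        # Assumption: credit-normal Account, so credits add and debits subtract.
        return sum(
            e.amount if e.direction == "credit" else -e.amount
            for e in self.entries_at_version(version)
        )
```

Unlike timestamps, versions are unique per Account, so two Entries written in the same instant still map to two distinct, ordered balances.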

Since the Entries on a Transaction can be modified until the Transaction is in the posted state, we need to preserve past versions of a Transaction to be able to fully reconstruct past states of the ledger. The Ledger API stores all past states of the ledger with another field on Transaction, version.

Transactions report their current version, and past versions of the Transaction can be retrieved by specifying a version when querying.

Past Transaction versions are used when trying to reconstruct the history of a particular event that involves multiple changing Accounts.

Example: A Bill-Splitting App

Let’s consider a bill-splitting app. A pending Transaction represents the bill when it’s first created, and users can add themselves to subsequent versions of the bill before it is posted:

Version 0: Chuck adds himself to the bill. Since he is the only party to the bill, the entry shows him paying for the whole amount.

Version 1: Dani wants to split costs. Now the bill amount is split between entries on Chuck’s account and Dani’s account.

Version 2: Elio is the final addition to split the bill three ways.

Version 3: The bill payment is posted on each account.
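The four versions above can be sketched as a Transaction that appends a snapshot on every change instead of overwriting itself. The `VersionedTransaction` class, the `shares` mapping, and the bill amount used in the usage note are hypothetical; only the split among Chuck, Dani, and Elio and the pending-to-posted flow come from the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class TransactionVersion:
    version: int
    status: str              # "pending" or "posted"
    shares: Dict[str, int]   # account -> amount owed, in cents


class VersionedTransaction:
    """Keeps every past version; changes append a new snapshot."""

    def __init__(self, shares: Dict[str, int]) -> None:
        self._history: List[TransactionVersion] = [
            TransactionVersion(0, "pending", dict(shares))
        ]

    @property
    def current(self) -> TransactionVersion:
        return self._history[-1]

    def _append(self, status: str, shares: Dict[str, int]) -> None:
        self._history.append(
            TransactionVersion(len(self._history), status, dict(shares))
        )

    def update(self, shares: Dict[str, int]) -> None:
        if self.current.status != "pending":
            raise ValueError("only pending Transactions can be modified")
        self._append("pending", shares)

    def post(self) -> None:
        if self.current.status != "pending":
            raise ValueError("Transaction is already posted")
        self._append("posted", self.current.shares)

    def at(self, version: Optional[int] = None) -> TransactionVersion:
        """Return the current version, or a past one when `version` is given."""
        return self.current if version is None else self._history[version]
```

With a hypothetical $90.00 bill, creating the Transaction with `{"chuck": 9000}`, updating it twice as Dani and Elio join, and then calling `post()` leaves four retrievable versions, so `at(1)` still shows the two-way split after the bill is posted.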

Audit Logs

We’ve recorded past states of the main ledger data models, but we haven’t recorded who made the changes. As ledgers scale to more product use cases, it’s common for the ledger to be modified by multiple API keys and by internal users through an admin UI. We recommend keeping an audit log alongside the ledger to record that kind of information.

Our Ledgers API audit logs record what was changed, by whom, and when.

Double-Entry: Preventing the Creation or Destruction of Funds

Guarantee: All money movement must record the source and destination of funds.

Once a ledger is immutable, the next priority is to make sure money can’t be created or destroyed. We do this by validating that every Transaction has:

- At least two Entries, one debit and one credit

- Debits and credits that equal each other, per currency

We’ll focus just on the implementation details here—if you want a primer on how to use double-entry accounting, check out our guide, Accounting For Developers.

Why Currency-Level Balancing Matters

Double-entry accounting must be enforced at the application layer. When a client requests to create a Transaction, the application validates that the sum of the Entries with direction: credit equals the sum of the Entries with direction: debit. But what happens when a Transaction contains Entries from Accounts with different currencies?

Imagine a platform that allows its users to purchase and manage cryptocurrency. Let’s say Freya buys 1 ETH and the platform wants to represent that purchase as an atomic Transaction.

One way to structure that is a Transaction involving four accounts: two for Freya (one in USD and one in ETH, credit-normal) and two for the platform (one in USD and one in ETH, debit-normal). This is a valid Transaction, and the credit and debit Entries still match using the original summing method.

However, the original method breaks down when the Entries across currencies match, but the Transaction isn’t balanced. Here’s an example:

Even though 1+1=2, this Transaction is crediting a fraction of ETH (created out of nowhere), and Freya was debited $1 (which disappeared).

The correct validation is to group Entries by currency and validate that credits and debits for each currency match.

You might think that because Freya spends $4,586.51 to buy one ETH, we could implement currency conversion Transactions with just two entries (one for USD and one for ETH) and that they could balance based on the exchange rate of USD to ETH. There are three main issues with that implementation:

- Because currency exchange rates fluctuate over time, it would be difficult to verify that the ledger was balanced in the past.

- There isn’t a universally agreed-upon exchange rate for all currencies. It would be very difficult for the platform and the ledger to agree that a Transaction is balanced.

- Having just two Accounts doesn’t match the reality of how currency conversion is performed. To allow Freya to convert dollars to ETH, the platform must have an account of ETH from which to disburse the crypto and a pool of dollars in which to place Freya’s money. That process will always involve at least four Accounts.
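To make the difference between the two validation methods concrete, here is a minimal sketch that compares a naive sum across all Entries with a per-currency check. The `Entry` shape, the account names, the integer smallest-unit amounts, and the specific unbalanced example are assumptions for illustration; they are not the Ledgers API's schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Entry:
    account: str
    currency: str
    direction: str  # "credit" or "debit"
    amount: int     # smallest unit of the currency (cents, wei, ...)


def naive_is_balanced(entries: List[Entry]) -> bool:
    """Original method: compare total credits and debits, ignoring currency."""
    credits = sum(e.amount for e in entries if e.direction == "credit")
    debits = sum(e.amount for e in entries if e.direction == "debit")
    return credits == debits


def is_balanced(entries: List[Entry]) -> bool:
    """Per-currency method: credits must equal debits within each currency."""
    if len(entries) < 2:
        return False
    totals: Dict[str, Dict[str, int]] = defaultdict(lambda: {"credit": 0, "debit": 0})
    for e in entries:
        totals[e.currency][e.direction] += e.amount
    return all(t["credit"] == t["debit"] for t in totals.values())


# Four-account purchase: each currency balances on its own.
purchase = [
    Entry("freya_usd", "USD", "debit", 458_651),
    Entry("platform_usd", "USD", "credit", 458_651),
    Entry("platform_eth", "ETH", "debit", 10**18),
    Entry("freya_eth", "ETH", "credit", 10**18),
]

# Hypothetical bad Transaction: totals match (1 + 1 == 2) but no currency balances.
unbalanced = [
    Entry("freya_usd", "USD", "debit", 1),
    Entry("platform_eth", "ETH", "debit", 1),
    Entry("freya_eth", "ETH", "credit", 2),
]
```

Here `naive_is_balanced` accepts both Transactions, while `is_balanced` accepts `purchase` and rejects `unbalanced`. No exchange rate appears anywhere in the check, which is what keeps historical validation independent of rate fluctuations.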

Next Steps

This is the fifth chapter of a broader technical paper with a more comprehensive overview of the importance of solid ledgering and the amount of investment it takes to get right. You now understand two of the guarantees that make a ledger scalable: immutability and double-entry. If you want to explore implementation details or see how Modern Treasury simplifies all of the above, read our docs or get in touch.


Authors

Matt McNierney serves as Engineering Manager for the Ledgers product at Modern Treasury, and is a frequent contributor to Modern Treasury’s technical community. Prior to this role, Matt was an Engineer at Square. Matt holds a B.A. in Computer Science from Dartmouth College.