Why Use a Managed Ledger

In this article, we discuss the benefits and drawbacks of using an external ledger service.

Introduction

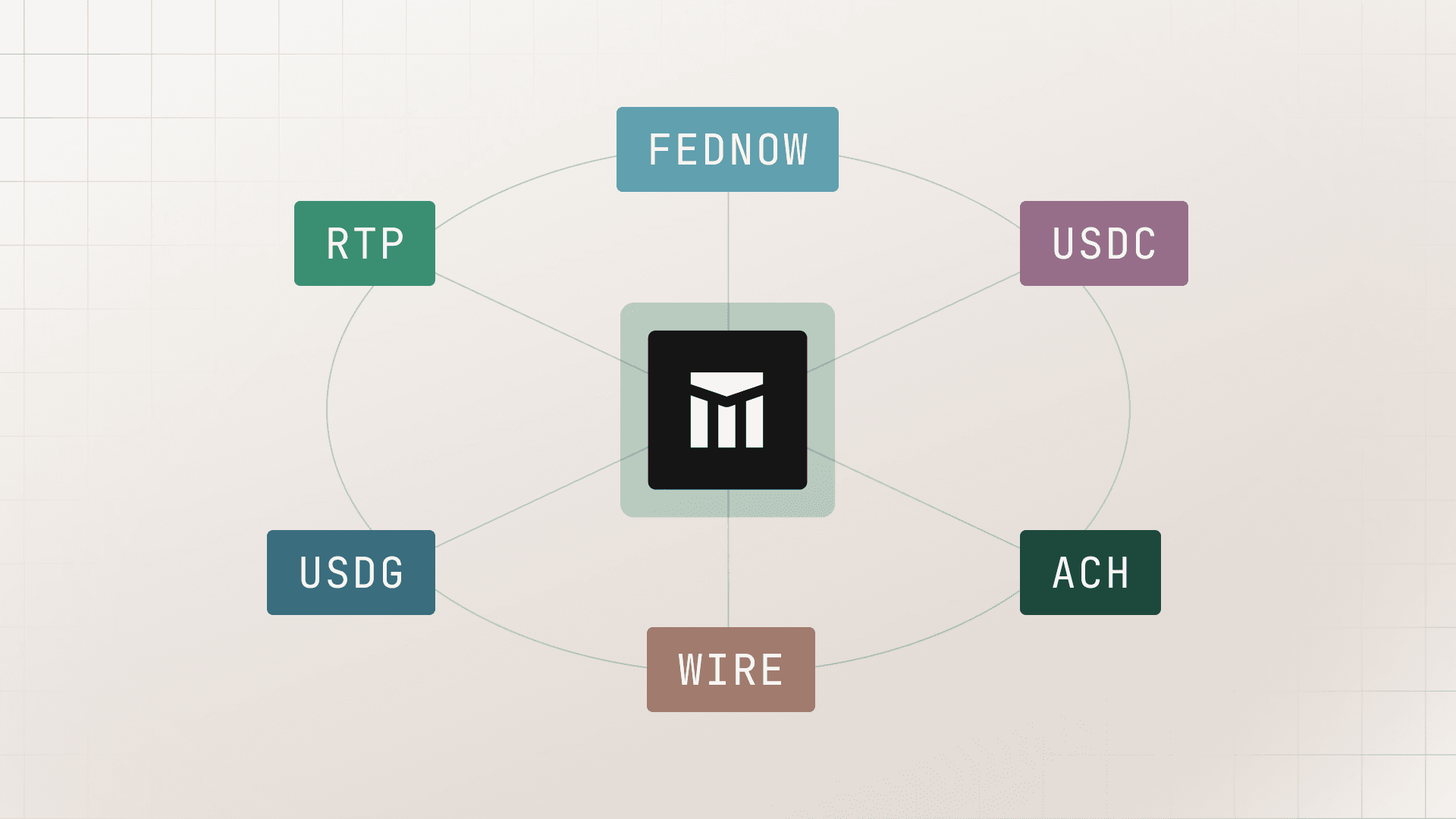

We launched Ledgers in 2021 because we saw many of our customers searching for the right patterns to record their money movement. In the world of complex payment operations (fintechs, marketplaces, vertical SaaS companies adding fintech services, and Fortune 2000 companies with complex payment processes), integrating with a bank or payment service to move money is just the start. Companies often underestimate the complexity of recording that money movement, which we call ledgering, and that is the problem we set out to solve.

As we’ve onboarded dozens of businesses onto Ledgers, we’ve noticed that for many companies, building a ledger on top of an existing in-house database is still the default behavior. This usually takes the form of a patchwork of systems, as we’ll show below. We’ve seen many of these companies think through the tradeoffs of building in-house versus using an external service. Often, our customers’ developers, engineering managers, and PMs have an “aha moment” when they realize the many benefits of outsourcing.

We believe that companies of all sizes will eventually run all their payment operations on dedicated infrastructure, similar to the shift to cloud computing. In this article, we’ve outlined the benefits our customers have realized from following this path. Our goal is to share what we’ve learned in an effort to help you make the right decision for your business, even if it means using another external ledger provider. We start with a refresher on what a ledger database is and what we mean by it.

What is a Ledger Database?

A ledger is a log of business events that have a monetary impact, captured in double-entry format. By abstracting balance mutations, purchases, payouts, interest calculations, wallet withdrawals, fees, and the many other payment operations events you need to track into simple entries in a double-entry database, you can simplify your work. No more balances and payouts tables in Postgres; an application ledger database abstracts all of this into two classes of objects: accounts and transactions.

A (well-designed) ledger should:

- Act as a central data store divorced from your underlying fund flow structure (e.g., your bank account setup, your application data models, or the data and money you hold on a third-party system like Stripe);

- Depict transactions in a double-entry format, as it is the centuries-old standard for tracking financial data;

- Handle scale (as opposed to your ERP and accounting system, which is also double-entry, but best used for historical reporting, not real-time data handling).

With these definitions in place, let’s walk through the reasons why outsourcing your ledger is a good idea.

Why Outsource Your Ledger

Let’s assume the following is true:

- Your business is buying from an infrastructure company (with entire teams of engineers dedicated to keeping ledger infrastructure running),

- Such support from engineers is baked into the cost, and,

- This infrastructure company and said engineers have a track record of success.

Under these assumptions, building a ledger in-house almost always ends up being more risky, expensive, and complex than buying.

Complexity: the hidden cost of building a central source of truth

At its core, building a ledger is a challenge in three forms: an accounting challenge, a computer science challenge, and a data coordination challenge.

The accounting challenge

Building double-entry logic in a database correctly is a non-trivial technical task. As we wrote in accounting for developers, building a double-entry ledger entails creating a translation layer between business events and accounting entries. The alternative is to not use double-entry at all, which leads to all sorts of issues, as the publicly documented examples of Uber, Square, and Airbnb (which we keep referring back to) show.
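To make the idea of a translation layer concrete, here is a minimal Python sketch. The event (a wallet payout with a platform fee), the account names, and the function name are all hypothetical and chosen only for illustration; this is not how any particular ledger product models payouts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    account: str    # hypothetical account name
    direction: str  # "debit" or "credit"
    amount: int     # minor units (cents), to avoid float rounding


def entries_for_payout(amount: int, fee: int) -> list:
    """Translate a hypothetical 'wallet payout with fee' event into entries."""
    if amount <= 0 or not 0 <= fee < amount:
        raise ValueError("expected amount > 0 and 0 <= fee < amount")
    entries = [
        # The user's wallet is a liability: a debit reduces what we owe them.
        Entry("user_wallet", "debit", amount),
        # Cash is an asset: a credit records money leaving the bank account.
        Entry("cash", "credit", amount - fee),
    ]
    if fee:
        # Revenue is credit-normal: a credit records the fee the platform keeps.
        entries.append(Entry("fee_revenue", "credit", fee))
    return entries


payout = entries_for_payout(amount=10_000, fee=250)
total_debits = sum(e.amount for e in payout if e.direction == "debit")
total_credits = sum(e.amount for e in payout if e.direction == "credit")
```

The application only ever describes the business event; the function decides which accounts move and in which direction, and the debits and credits it emits always sum to the same amount.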

Assuming double-entry is desired, you’re better off having the support of a trusted partner in thinking through the transaction handling logic. Most ledger providers will work with you on this (we do). Having a partner to learn best practices from accelerates the time to go live, assures scalability, and prevents downstream issues in accuracy.

The computer science challenge

As we covered in how and why homegrown ledgers break, single-entry ledger databases break down when they are too opinionated or when systems of checks and balances start to fail. At a more fundamental level, however, scaling a double-entry database is hard.

Does your system accept eventually consistent balance aggregations? Or does your use case require some level of immediacy on balance updates? How do you deal with the inherent limitations of the database you use? What kind of concurrency controls do you need to ensure the ledger is consistent at scale? How do you handle versioning, locking, and partitioning?
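One common answer to the concurrency question is optimistic locking with a version column. The sketch below uses Python's built-in sqlite3 module purely for illustration; the table name, column names, and helper functions are assumptions, and a production ledger would face these questions on a very different database and scale.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE balances ("
    " account_id TEXT PRIMARY KEY,"
    " amount INTEGER NOT NULL,"   # minor units (cents)
    " version INTEGER NOT NULL)"  # bumped on every successful write
)
conn.execute("INSERT INTO balances VALUES ('wallet_1', 10000, 0)")
conn.commit()


def read_balance(account_id):
    """Return (amount, version) as currently stored."""
    return conn.execute(
        "SELECT amount, version FROM balances WHERE account_id = ?",
        (account_id,),
    ).fetchone()


def apply_delta(account_id, delta, expected_version):
    """Write only if nobody else has written since we read the row."""
    cur = conn.execute(
        "UPDATE balances SET amount = amount + ?, version = version + 1 "
        "WHERE account_id = ? AND version = ?",
        (delta, account_id, expected_version),
    )
    conn.commit()
    return cur.rowcount == 1  # zero rows updated means our read was stale


# Two writers read the same snapshot, then both try to write.
_, version_seen_by_a = read_balance("wallet_1")
_, version_seen_by_b = read_balance("wallet_1")
first_ok = apply_delta("wallet_1", -4000, version_seen_by_a)
second_ok = apply_delta("wallet_1", -7000, version_seen_by_b)
final_amount, final_version = read_balance("wallet_1")
```

Here the second writer is rejected because its version is stale, so it has to re-read the balance before retrying instead of silently overdrawing the account. Whether to use this approach, row locks, or something else is exactly the kind of tradeoff the questions above point at.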

These are not trivial issues, and a good vendor tends to have answered all of these questions with dedicated infrastructure teams.

The data coordination opportunity

Finally, it’s our belief that a good ledger should act as a central source of truth. A flexible ledger is crucial in a world where (1) fintech stacks are bloated, (2) transaction data is sprawled across payment systems, card issuing APIs, embedded lending providers, etc., and (3) ERPs are good for reporting but simply not performant enough to inform your product. Instead of figuring out how to parse logic by yourself, a partner can help, ideally with both direct integrations and guidance.

Cost: how buying is actually cheaper over time

To illustrate our point, we plotted a list of features we believe are important in a ledgering system. From the top, we have the foundational features: the ledger itself, immutability, idempotency, etc. The further down the list, the more these requirements are dependent on your use case.

* The cost assumes an all-in engineering cost of $250k per engineer per year, based on $197,204 average total compensation for an engineer in San Francisco plus fringe costs such as benefits and employer-paid taxes.

Many developers see the task of setting up SQL tables as trivial. But enforcing double-entry at the database level requires you to organize the database around three core objects: accounts, transactions, and entries. Accounts need to have constraints that enforce account normality. Transactions need to accommodate two or more entries, and each entry should include a direction property that defines whether it is a credit or a debit.
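The three objects can be sketched in a few lines of Python. This is an in-memory illustration under our own assumptions (the class and field names are hypothetical, and amounts are integers in minor units); a real implementation would express the same shape as database tables and constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    name: str
    normal_balance: str  # "debit" (e.g. assets) or "credit" (e.g. liabilities)


@dataclass(frozen=True)
class Entry:
    account: Account
    direction: str  # "debit" or "credit"
    amount: int     # minor units (cents)


@dataclass(frozen=True)
class Transaction:
    description: str
    entries: tuple  # two or more Entry objects


def balance(account, transactions):
    """Entries in the account's normal direction add; the others subtract."""
    total = 0
    for tx in transactions:
        for entry in tx.entries:
            if entry.account == account:
                sign = 1 if entry.direction == account.normal_balance else -1
                total += sign * entry.amount
    return total


cash = Account("cash", normal_balance="debit")
wallet = Account("user_wallet", normal_balance="credit")

history = [
    Transaction("deposit", (Entry(cash, "debit", 5_000),
                            Entry(wallet, "credit", 5_000))),
    Transaction("withdrawal", (Entry(wallet, "debit", 2_000),
                               Entry(cash, "credit", 2_000))),
]

cash_balance = balance(cash, history)
wallet_balance = balance(wallet, history)
```

Account normality is what lets both balances read as positive numbers: the deposit debits cash and credits the wallet, and each account counts entries in its own normal direction as increases.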

On top of this, you need to create rules that ensure credits equal debits at the transaction level. You also need to ensure the ledger features an append-only design (for the sake of immutability), and you need to create idempotency controls to ensure duplicate transactions are properly handled. The list goes on: these are database challenges that take a team of two engineers four to five months (or about $200k) to get right.
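As a rough illustration of those three rules together, the sketch below keeps an in-memory, append-only log, rejects unbalanced transactions, and deduplicates on an idempotency key. The `Ledger` class, its method names, and the tuple layout of entries are assumptions made for this example; in practice these guarantees have to be enforced in the database itself, which is where the effort goes.

```python
class Ledger:
    """In-memory sketch of an append-only, idempotent, double-entry log."""

    def __init__(self):
        self._log = []     # only ever appended to, never updated or deleted
        self._by_key = {}  # idempotency key -> position in the log

    def post(self, idempotency_key, entries):
        """Append a transaction; entries are (account, direction, amount)."""
        if idempotency_key in self._by_key:
            # A retry of a request we already handled: return the original.
            return self._log[self._by_key[idempotency_key]]
        entries = tuple(entries)
        if len(entries) < 2:
            raise ValueError("a transaction needs at least two entries")
        for _, direction, amount in entries:
            if direction not in ("debit", "credit") or amount <= 0:
                raise ValueError("invalid entry")
        debits = sum(a for _, d, a in entries if d == "debit")
        credit_total = sum(a for _, d, a in entries if d == "credit")
        if debits != credit_total:
            raise ValueError("debits must equal credits")
        tx = (idempotency_key, entries)
        self._by_key[idempotency_key] = len(self._log)
        self._log.append(tx)
        return tx

    @property
    def transactions(self):
        return tuple(self._log)  # read-only view of the log


ledger = Ledger()
deposit = [("cash", "debit", 5_000), ("user_wallet", "credit", 5_000)]
first = ledger.post("evt_001", deposit)
retry = ledger.post("evt_001", deposit)  # same key: no second transaction

try:
    ledger.post("evt_002", [("cash", "debit", 5_000),
                            ("user_wallet", "credit", 4_000)])
    unbalanced_rejected = False
except ValueError:
    unbalanced_rejected = True
```

Because nothing is ever updated in place, a mistake would be corrected by posting a new, reversing transaction rather than by editing the old one. What to do when a key is replayed with different entries is a design choice this sketch leaves out.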

From here, you need to build for scale. Processing transactions concurrently, ensuring high throughput, low latency, and high availability, and implementing locking: these are all guardrails that any scaling company has to build. Building and testing this thoughtfully could take a team of two engineers another four to five months. Because of the nature of this problem, the mythical man-month principle applies here: more engineers don’t necessarily make this faster.

At scale, you may also need to build for your use case. For example, you may want transactions to take a status property, so they can be pending before they become posted. You may want the ability to fetch balances as of specific dates. Or perhaps you need a fully featured UI linked to your ledger, with SSO and RBAC, that your ops and finance teams can use. This is at least six months of engineering time for a team of two. And this is not counting the indeterminate amount of work around building pushes to your data warehouse or ERP, or integrations with other systems.
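The first two of these features can be sketched as follows. The `status` and `effective_date` field names, the `transfer` helper, and the `balance_as_of` signature are hypothetical, and the dates and amounts are made up for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Entry:
    account: str
    direction: str  # "debit" or "credit"
    amount: int     # minor units (cents)


@dataclass(frozen=True)
class Transaction:
    effective_date: date
    status: str     # "pending" or "posted"
    entries: tuple


def transfer(on, status, debit, credit, amount):
    """Build a balanced two-entry transaction."""
    return Transaction(on, status, (Entry(debit, "debit", amount),
                                    Entry(credit, "credit", amount)))


def balance_as_of(transactions, account, normal_balance, as_of,
                  include_pending=False):
    """Sum entries dated on or before as_of, optionally counting pending."""
    statuses = {"posted", "pending"} if include_pending else {"posted"}
    total = 0
    for tx in transactions:
        if tx.effective_date > as_of or tx.status not in statuses:
            continue
        for entry in tx.entries:
            if entry.account == account:
                sign = 1 if entry.direction == normal_balance else -1
                total += sign * entry.amount
    return total


history = [
    transfer(date(2024, 1, 5), "posted", "cash", "user_wallet", 10_000),
    transfer(date(2024, 1, 20), "posted", "user_wallet", "cash", 3_000),
    transfer(date(2024, 1, 25), "pending", "user_wallet", "cash", 2_000),
    transfer(date(2024, 2, 2), "posted", "cash", "user_wallet", 500),
]

jan_31 = date(2024, 1, 31)
posted_jan = balance_as_of(history, "cash", "debit", jan_31)
with_pending_jan = balance_as_of(history, "cash", "debit", jan_31,
                                 include_pending=True)
posted_feb = balance_as_of(history, "cash", "debit", date(2024, 2, 29))
```

With this data the posted cash balance at the end of January is 7,000, dropping to 5,000 once the pending withdrawal is counted. Scanning the whole history on every read is fine for a sketch, but it is the part that stops working at scale.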

Finally, as you grow, you will inevitably deal with scaling challenges. As we showcased in how and why homegrown ledgers break, more often than not, initial implementation designs fail over time, consistency is compromised by scale, refactors become necessary, and as a result, your cost-over-time graph ends up looking like this:

Instead of like this:

Risk: the value of keeping your data with a trusted third party

The risk of using an external provider usually takes one of four forms:

- Performance risk (your vendor not performing according to SLAs);

- Data security risk (your vendor losing access to, leaking, or being vulnerable to attacks on critical data);

- Vendor risk (the chance of your provider going out of business);

- Lock-in risk (your inability to switch vendors if necessary).

On the flip side, the risks of building in-house are:

- Human error/performance risk (your team not building well the first time due to lack of expertise or competing priorities);

- Timeline/scope creep (building the right thing, but in a way that takes far too long and costs more money);

- Technical debt (building the wrong thing or building the right thing in the wrong way).

We believe that a trustworthy third party can minimize vendor risks while eliminating the risks of building in-house. At Modern Treasury, for instance, we have ambitious SLIs around availability, latency, and throughput. We implemented SSO, SOC 1 and SOC 2 compliance, RBAC, data encryption in transit (TLS 1.2) and at rest (AES-256-GCM), IDS, ingress and egress filtering, and more, to minimize the likelihood of security breaches. Working with some of the largest fintechs and marketplaces in the world (ClassPass and TripActions, for example) means our APIs are battle-tested. Finally, we don’t believe in locking you in unnecessarily, so your data can always be exported to your data warehouse of choice.

Some vendors provide similar features; others don’t. What’s important is that you do your diligence in the process. But working with a trustworthy vendor means you are putting the three risks of building in-house (human error, scope creep, technical debt) under the scrutiny of a partner who has done this hundreds of times.

The end result: faster infra → faster products → better companies, out of the box

For many companies, all of this translates into faster product velocity: launching a new product faster because you have to neither build nor maintain a double-entry ledger service. Faster time to market means quicker revenue and lower burn. But beyond the initial launch, outsourcing this undifferentiated part of your stack means peace of mind. Having engineers focused on your ledger at all times takes time away from your product. And just like your cloud provider, as your vendor works to continuously improve their infrastructure, your infrastructure gets better, too.

Next Steps

All in all, if you do decide to outsource your ledger, we present a good option. What matters is that you make the best decision for your business. If you are interested in learning more, or simply have feedback on any of these points, take a look at our Ledgers product, and reach out to us here anytime.

Authors

Lucas Rocha is the PM on the Ledgers product, driving strategy for the company’s database for money movement. Before Modern Treasury, Lucas worked in VC at JetBlue Technology Ventures and Unshackled Ventures. He earned his MBA from Harvard Business School and his bachelor’s degree from Northeastern University.